Tag Archives: wellness outcomes

Aon channels Britney Spears in Lyra report

An open (and also sent, received, read and unobjected to) letter to Aon’s chief actuary, Ron Ozminkowski.

Dear Mr. Ozminkowski,

It seems that there are always some rookie mistakes in your analyses. Either that, or you are simply “showing savings” because your clients are oxymoronically paying you as “independent actuaries” specifically to show savings. I will assume that your mistakes are just rookie mistakes, rather than deliberate misstatements. Yet as I recall, you never fixed your Accolade analysis after it was pointed out that your own assumptions, when correctly analyzed by someone whose IQ possesses that critical third digit, inexorably led to the opposite conclusion: Accolade loses money.

Perhaps that bug is a feature for your clients, and indeed your job description is to “show savings.” Mine is the opposite: to demonstrate integrity.

If I am wrong and you are genuinely trying to do the right thing, I would be happy to fly out there and teach you people how to do arithmetic, because, in the immortal words of the great philosopher Britney Spears, oops, you did it again. This time for Lyra. With all the money they paid you, it seems like they should be able to expect correct analysis. They might be very disappointed in you.

On the off-chance that you’d like to see what a real study design looks like in mental health, Acacia Clinics would be a good one to review. Here is the Validation Institute report and here is the science underpinning it.

If you are quite certain your arithmetic is correct despite all indications to the contrary, I would invite you to bet. I say that Acacia Clinics’ study design and analysis is correct and your study design and analysis is wrong. You say the opposite. Here are the rules for the bet. If you won’t bet, you are of course conceding that Acacia’s analysis is correct and your analysis, to use a technical biostatistical term, sucks.

I am already finding five rookie mistakes, and I’ve only read the first five pages.

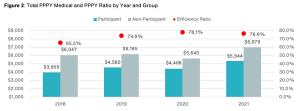

First take a looksee at this screenshot below. I was having a lot of trouble figuring out how the red dots showing something you’ve dubbed the “efficiency ratio” (a term which apparently has no meaning in health services research, as far as Google is concerned, while ChatGPT thinks it means something else altogether, but what do they know?) were related to the differences in the size of the bars. Then I realized you accidentally started the y-axis at $4000 instead of $0. A rookie mistake, which inadvertently makes the alleged savings look about 3 times higher than they are.

Meaning your so-called “efficiency ratio” is the value in the blue bar as a percentage of the gray bar, not the height of the blue bar as a percentage of the gray bar. Call me a traditionalist, but in my humble opinion those two ratios should be the same. (Note: apologies for the blurry screenshot. That’s how it’s reproducing.)
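To see how much a truncated axis can exaggerate a gap, here is a minimal sketch in Python. The two dollar figures are hypothetical, chosen only so that the distortion comes out near the "about 3 times" described above; they are not taken from the Aon report.

```python
def gap_as_share_of_taller_bar(shorter, taller, axis_start=0.0):
    """Gap between two bars as a share of the taller bar's drawn height."""
    return (taller - shorter) / (taller - axis_start)

# Hypothetical per-person costs, for illustration only (not Aon's figures).
non_participant_cost = 6000.0
participant_cost = 5400.0

# Gap when the y-axis starts at $0, i.e. the gap in the actual values.
true_gap = gap_as_share_of_taller_bar(participant_cost, non_participant_cost)

# Gap as the eye sees it when the y-axis starts at $4000.
drawn_gap = gap_as_share_of_taller_bar(
    participant_cost, non_participant_cost, axis_start=4000.0
)

exaggeration = drawn_gap / true_gap

print(f"Gap in the actual values:       {true_gap:.0%}")
print(f"Gap as drawn from a $4000 axis: {drawn_gap:.0%}")
print(f"Visual exaggeration:            {exaggeration:.1f}x")
```

With these made-up inputs, a 10% difference in dollars is drawn as a 30% difference in bar height. The closer the axis start sits to the bars, the larger that multiple gets.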

I did notice that later on, pretty much the same data in Figure 1 was reproduced as Figure 2, but this time you started the y-axis at $1000. So you’re definitely getting warmer!

Happy New Years!

Second, you may want to check your calendar, because it is now 2024. Your analysis ends at 2021. You’ve had almost two years and five full months plus a Leap Day to update it and yet, you cut it off in 2021. A cynic might conclude that you picked that end date because the alleged benefit you are claiming regresses further to the mean in 2022 and 2023.

Looks like you threw up in front of Dean Wormer

Third, speaking of regressing to the mean, the reason a cynic could infer that conclusion is that your so-called “efficiency ratio” already was regressing to the mean. Let’s assume, for now, the unassumable: that your “matched controls” are a legitimate study design. (If they were, the FDA would allow them.) Between 2018 and 2021, according to your own numbers on that chart, participant costs rose 31% while non-participant costs rose 22%. And yet somehow that statistic appears nowhere in your report, once again a rookie mistake.
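The drift can be checked from the two growth rates quoted above. This sketch assumes the “efficiency ratio” is participant cost divided by non-participant cost, which is how the chart reads rather than a definition Aon supplies.

```python
# Growth from 2018 to 2021, as quoted above.
participant_growth = 0.31
non_participant_growth = 0.22

def relative_ratio_change(numerator_growth, denominator_growth):
    """Change in (numerator / denominator) when each side grows at its own rate."""
    return (1 + numerator_growth) / (1 + denominator_growth) - 1

drift = relative_ratio_change(participant_growth, non_participant_growth)
print(f"Participant cost relative to non-participant cost rose {drift:.1%}")
```

Under that assumption, participant costs climbed roughly 7% relative to non-participant costs over the period, which is the opposite of a widening advantage.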

Are you having connectivity issues?

Fourth, there are two types of outcomes researchers in our industry. Those who think “matched controls” are a valid study design for this kind of analysis, and those who have a connection to the internet. If you can’t afford my seminal book, try this article on the Validation Institute website which proves – using fifth-grade arithmetic – why that methodology doesn’t work. Period.

Perhaps the giveaway why “matched controls” don’t work in this case might be that the savings started on the first day of the baseline year. An employee has one phone call (yes, that was the cutoff point to get into the study group, though some people had many more) with one of Lyra’s “220,000 high-quality providers” and their medical spending drops precipitously. I’d also love to know what Lyra’s Secret Sauce is, that lets them retain 220,000 providers, all of whom are “high-quality.”

The following things change immediately as a result of that call, even though they are not part of the conversation and require a real doctor or in the case of ER visits, a great deal of luck:

- Non-mental health emergency visits plunge by 30%

- Generic drug scripts plunge by 30%

- By 2021, even expensive specialty meds fall by more than 20%

You might want to retain a smart person to explain the difference between correlation and causation. Alternatively, perhaps you are concerned that this meteor almost hit the visitors center?

A mystery wrapped inside a riddle wrapped inside a seven-figure consulting fee

Fifth, consider that Aon has data for:

- medical claims

- diagnoses

- professional mental health spending

- inpatient mental health spending

- outpatient mental health spending

- spending on non-mental health

- ED and inpatient visits

And consider that:

- They did this study for Lyra

- The study is called “Lyra Cost Efficiency [sic*] Results”

- The “Workforce Mental Health Program information was provided by Lyra Health”

Yet somehow – despite having the aforementioned two years, five months and a leap day to prepare this study – they claim to have absolutely no idea how much Lyra’s services cost:

We suspect it is a lot, perhaps enough that mental health professional fees with Lyra for participants exceed mental health spending by non-participants. Because in addition to having to pay their “evidence-based therapists” (Lyra’s term), sales, marketing, overhead and profit, Lyra needs to pay off the benefits consultants too, to “partner with” them:

Finally, where’s the guarantee of credibility? Does Aon not stand behind its work? I guess that’s a wise move on your part, because if you did, I’d be rich. By contrast, Acacia Clinics was validated by the Validation Institute (VI). They do stand behind their work, so the VI’s findings on Acacia Clinics’ outcomes are backed by a $100,000 Credibility Guarantee. That, of course, is in addition to my own guarantee.

Did Mr. Ozminkowski just damage Lyra’s reputation…and Aon’s own?

The irony here is that Lyra is considered (or was considered, until this report) a perfectly legit vendor that is providing a valuable service of connecting employees to mental health professionals that match their needs. That is especially useful these days, when mental health benefits are very skinny and mental health providers are hard to come by. The “ROI” is employee appreciation, and possibly higher productivity. Not magical reductions in medical spending completely unrelated to the issues they are calling about.

Paying off consultants (who coincidentally also send them business) to pretend otherwise could damage that reputation. A rookie mistake on their part.

Further, there are some really smart, really honest consultants at Aon. But just like one dirty McDonald’s would sully all of them, the organization as a whole suffers when one consultant goes rogue.

*It’s either “efficiency,” meaning the cost vs. the benefit, or “cost-effectiveness.” “Cost efficiency” is redundant. They really shouldn’t need me to tell them that – or, for that matter, anything else in their report.

HERO (Health Enhancement Research Organization) Crowdsources Arithmetic

This is the third in a series deconstructing the Health Enhancement Research Organization’s (HERO) attempt to replace the basic outcomes measurement concepts presented to the human resources community in Why Nobody Believes the Numbers with a crowdsourced consensus version of math. The first installment covered Pages 1 to 10 of their outcomes measurement report, where HERO shockingly admitted wellness hurts morale and corporate reputations. The second installment jumped ahead to page 15, where HERO shockingly admitted wellness loses money. This report covers pages 11-13. Next week we shall be covering Page 14.

Spoiler Alert: The wellness industry believes that math is a popularity contest. (We have a million-dollar reward if they can show that’s true. More on that later.)

All the luminaries in the wellness industry got together to crowdsource arithmetic, and put their consensus (a word they use 50 times) in an 88-page report. Unfortunately, math is not a consensus-based discipline, like democracy. It is not even an evidence-based discipline, like science. It is a proof-based discipline. A methodology that doesn’t work in hypothetical mathematical circumstances is proven wrong no matter how many votes it gets.

The pages in question list 7 “methodologies” for measuring outcomes. To begin with, consider the absurdity of having 7 different ways to measure. Imagine if you asked your stockbroker how much money you made last year, and were told: “Well, that all depends. You could measure that seven different ways. And by the way, six of those ways will overstate your earnings.” Math either works or it doesn’t. There is only one right answer.

Methodology #1: “Cost Trend Compared with Industry Peers”

This methodology “may require consulting expertise.”

As a sidebar, one of the many ironies of this HERO report is that most of these methodologies emphasize the need for actuarial or consulting “inputs” or “analytic expertise”…and yet no mention was made on Page 10 of the cost of this expertise when all the elements of cost were listed. While not mentioned as a cost element, consulting firms are very expensive. And even if consulting were free, we generally recommend that outcomes report analysis be done only by consultants who are certified in Critical Outcomes Report Analysis by the Validation Institute.

By contrast, Staywell and Mercer offer an example of what happens when you as a buyer use non-certified “consulting expertise” to evaluate a vendor. Here’s what happens: the vendor wins. Needless to say, Staywell showing savings 100x greater than what Staywell itself said was possible simply by reducing a few employees’ risks raises a lot of questions. But despite repeated requests and offers of honoraria to answer these questions, Mercer wouldn’t answer and the only response Staywell gave us was to accuse us of bullying them. Staywell and Mercer held firm to the Ignorati strategy of not commenting—even though Mercer was representing the buyer (British Petroleum), not the vendor. Oh, yes—both Staywell and Mercer are represented on the HERO Steering Committee.

To HERO’s credit, they do admit the obvious for Methodology #1: If all your peers are using the same vendors, who recommend the same worthless annual checkups, the same overscreening/overdiagnosis, the same lowfat(!) diets, and the same consultants to evaluate all the phony savings attributable to these checkups, diets, and biggest-loser contests, obviously you’ll get the same results. And since trend is going down everywhere (including Medicare and Medicaid, which have no wellness), everyone gets to “show savings.”

Methodology #2: “Inflection on expected cost trend.”

Mercer has been a big proponent of this methodology, as in the previous Staywell example. At one point they used “projected trend” to find mathematically impossible savings for the state of Georgia’s program even though the FBI(!) later found the program vendor, APS, hadn’t done anything. In North Carolina, they projected a trend that allowed them to show massive savings in the state’s patient-centered medical home largely generated, as luck would have it, by a cohort that wasn’t even eligible for the state’s patient-centered medical home.

Comparing to an “expected” trend is one of the most effective sleight-of-hand techniques in the wellness industry arsenal. Every single published study in a wellness promotional journal comparing results to “expected trend” has found savings. And have you ever hired a consultant or vendor to compare your results to “expected trend” who hasn’t found “savings”? We didn’t think so.

QED.

Methodology #3: “Chronic vs. non-chronic cost trend.”

The funny things about this methodology are twofold.

First, the HERO Committee already knows this methodology is invalid because it was disproven in Why Nobody Believes the Numbers (and I offered an unclaimed $10,000 reward for finding a mistake in the proof). We know that people on the Committee have read my book because at least one of them – Ron Goetzel – used to copy selected pages from it until the publisher, John Wiley & Sons, made him stop. Methodology #3 was the fallacy on which the entire disease management industry was based. I myself made a lot of money measuring outcomes this way, until I myself proved I was wrong. At that point, integrity being more important to me than money, I changed course abruptly, as memorably captured by Vince Kuraitis’ headline: Founding Father of Disease Management Astonishingly Declares: “My Kid Is Ugly”. (Naturally the benefits consulting industry filled the vacuum created by my withdrawal from this market, and plied their clients with worthless outsourced programs that more than coincidentally generated a lot of consulting fees.)

If you had perfect information and knew who had chronic disease (before the employees themselves did) and everyone stayed put in either the non-chronic or chronic categories, you could indeed use non-chronic trend as a benchmark, mathematically (though the epidemiology is still very squirrelly). The numbers would add up, at least in a hypothetical case.

But we can’t identify anywhere near 100% of the employees who have chronic disease. Absent that perfect information, any fifth grader could understand the proof that this methodology is fabricated, as follows. Assume that 10 people with a chronic disease cost $10,000 apiece both in the baseline and in the study period. Their costs are therefore flat. The program did not reduce costs between periods.

Now add in 10 people with undetected chronic disease as the “non-chronic benchmark.” Maybe they are ignoring their disease, maybe they don’t know they have it, maybe they are misdiagnosed, maybe the screen was wrong (vendor finger-pricks are very unreliable). Assume these 10 people cost $5000 in the baseline…but they have events in the study period so their costs become $10,000.

That makes the “non-chronic trend” 100%! Suddenly, the program vendor looks much better because they kept the costs of the chronically ill cohort constant even though the “benchmark” inflation was 100%.
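The arithmetic of that example can be written out in a few lines of Python. The dollar figures are the ones given above; the "claimed savings" line is the calculation the benchmark method implies, spelled out here for illustration.

```python
def trend(baseline, study):
    """Period-over-period cost trend as a fraction (1.0 means +100%)."""
    return study / baseline - 1

# 10 identified chronic members at $10,000 each in both periods.
chronic_baseline = 10 * 10_000
chronic_study = 10 * 10_000

# 10 "non-chronic" members with undetected disease: $5,000, then $10,000.
benchmark_baseline = 10 * 5_000
benchmark_study = 10 * 10_000

chronic_trend = trend(chronic_baseline, chronic_study)
benchmark_trend = trend(benchmark_baseline, benchmark_study)

# What the method says the chronic group "should" have cost.
expected_chronic_cost = chronic_baseline * (1 + benchmark_trend)
claimed_savings = expected_chronic_cost - chronic_study

# What actually changed in the chronic group's spending.
actual_savings = chronic_baseline - chronic_study

print(f"Chronic trend:   {chronic_trend:.0%}")
print(f"Benchmark trend: {benchmark_trend:.0%}")
print(f"Claimed savings: ${claimed_savings:,.0f}")
print(f"Actual savings:  ${actual_savings:,.0f}")
```

The chronic group's spending never moved, yet the benchmark comparison credits the program with $100,000.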

Second, Why Nobody Believes the Numbers has already shown how to make this methodology valid mathematically (though the epidemiology applied to that math might still be squirrelly, and there could still be random error in non-hypothetical populations). You simply apply a “dummy year analysis” to the above example. So do exactly what is described above, but for a year-pairing before the program. Then you’ll know what the regression-to-the-mean bias is, and apply that bias to the study years. So if in fact the “non-chronic trend” is always 100% due to the people with unrecognized chronic disease, you would take this trend out of the benchmark non-chronic population before applying that trend to the chronic population. In this case, as in every case, the bias is eliminated. This is called the Dummy Year Adjustment. (Chapter 1 of Why Nobody Believes the Numbers offers several examples of the DYA.)
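A rough sketch of that adjustment, reusing the hypothetical cohort from the example, might look like the following. Treating the bias as a simple difference in trends is an assumption made here for illustration; the book's own worked examples may formulate it differently.

```python
def trend(baseline, study):
    """Period-over-period cost trend as a fraction (1.0 means +100%)."""
    return study / baseline - 1

# Dummy year pair: two years BEFORE any program existed.
dummy_chronic_trend = trend(10 * 10_000, 10 * 10_000)    # identified chronic group
dummy_benchmark_trend = trend(10 * 5_000, 10 * 10_000)   # "non-chronic" benchmark

# Bias: how far the benchmark outruns the chronic group with no program at all.
# (Assumption: bias is modeled as a simple difference in trends.)
bias = dummy_benchmark_trend - dummy_chronic_trend

# Study year pair: the same pattern repeats, as in the example.
study_chronic_baseline = 10 * 10_000
study_chronic_actual = 10 * 10_000
study_benchmark_trend = trend(10 * 5_000, 10 * 10_000)

# Take the bias out of the benchmark before applying it to the chronic group.
adjusted_benchmark_trend = study_benchmark_trend - bias
expected_chronic_cost = study_chronic_baseline * (1 + adjusted_benchmark_trend)
adjusted_savings = expected_chronic_cost - study_chronic_actual

print(f"Bias found in dummy years: {bias:.0%}")
print(f"Adjusted benchmark trend:  {adjusted_benchmark_trend:.0%}")
print(f"Savings after adjustment:  ${adjusted_savings:,.0f}")
```

Once the 100% bias measured in the dummy years is removed, the phantom savings in the study years drop to zero, which matches what actually happened to the chronic group's costs.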

Proofs are best understood to be proofs if accompanied by rewards, since only an idiot would monetarily back a proof that wasn’t a proof. So here’s what we propose for this one: I’ll up my $10,000 reward to $1,000,000. A panel of Harvard mathematicians can decide who is mathematically right. The HERO Committee escrows a $100,000 nuisance fee for wasting my time and paying for the panel if they are wrong. (We’ll pay if we lose.) They present Methodology #3. We lose the $1,000,000 if the panel votes that this HERO methodology is valid without our “Dummy Year Adjustment.”

My challenge: Either collect your $1,000,000, or publicly apologize for proposing a methodology which you know to be made up. Or is offering you a million dollars “bullying,” a word defined very non-traditionally in this field? Our bad.

Yes, we know this sounds like a big risk but you might remember the old joke:

Science teacher: “If I drop this silver dollar into this vat of acid, will it dissolve?”

Student: “No, because if it would, you wouldn’t do it.”

Methodologies 4 and 5: The Comparison of Participants to Non-Participants

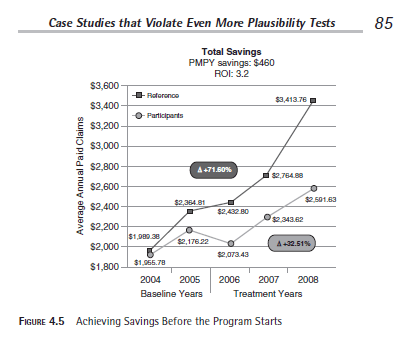

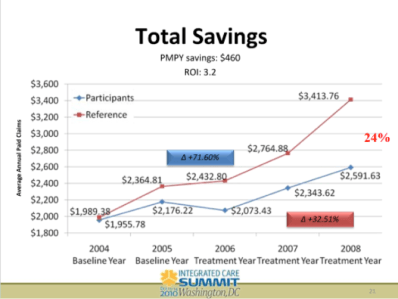

Besides not making any intuitive sense that active motivated engaged participants are somehow equivalent to inactive unmotivated non-participants, Ron Goetzel already admitted this methodology is invalid. Health Fitness Corporation accidentally proved that on the slide below.

Note that they “matched” the participant (blue) and reference (red) groups in the 2004 “baseline year” but didn’t start the “treatment” until 2006. However, in 2005, they already achieved 9% savings vs. the “reference group” even without a program. This “mistake” was in plain view, and was pointed out to them many times, politely at first. Page 85 of Why Nobody Believes the Numbers showed it, but as the screenshot below shows, I was too polite to mention names or even to call it a lie, figuring that as soon as Health Fitness Corporation or Ron Goetzel saw it, they/he would be honest enough to withdraw it.
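The 2005 gap described above works as a placebo test: any "savings" that show up before the program starts cannot have been caused by the program. A minimal sketch follows, using hypothetical indexed costs in which only the 9% gap in 2005 and the 2004 and 2006 dates come from the text.

```python
PROGRAM_START_YEAR = 2006

# Hypothetical indexed costs (reference group = 100 each year).
# Only the 9% gap in 2005 is taken from the text; the rest is illustrative.
costs_by_year = {
    2004: {"participants": 100.0, "reference": 100.0},  # matched baseline year
    2005: {"participants": 91.0, "reference": 100.0},   # no program yet
}

def savings_vs_reference(participants, reference):
    """Participant 'savings' as a share of the reference group's cost."""
    return 1 - participants / reference

# Collect every gap that appears before the program could have had any effect.
pre_program_gaps = {
    year: savings_vs_reference(c["participants"], c["reference"])
    for year, c in costs_by_year.items()
    if year < PROGRAM_START_YEAR
}

for year, gap in sorted(pre_program_gaps.items()):
    print(f"{year}: {gap:.0%} 'savings' with no program in place")
```

Any non-zero gap in a pre-program year measures the difference between the two groups themselves, not anything the program did.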

Not knowing the players well, I naively attributed the fact that HFC used this display to a rookie mistake, rather than dishonesty. That was plausible because rookie mistakes are the rule rather than the exception in this field. (As we say in Surviving Workplace Wellness, the good news about wellness vendors is that NASA employees don’t need to worry about their job security because these people aren’t rocket scientists.)

On the advice of colleagues more familiar with the integrity of the wellness industry true believers, I also tried a test of the rookie-mistake hypothesis: I strategically placed the page with this display next to the page that I knew Ron Goetzel would be reading (and copying), a page whereon I complimented him on his abilities. I might be the world’s only bully who publicly compliments his victims and offers to pay them money:

That way, I would know that if Mr. Goetzel and his Koop Committee and their sponsors HFC didn’t remove this display, it was due to a deliberate intention to mislead people, not an oversight or rookie mistake.

Sure enough, that display continued to be used for years. Finally, a few months ago, faced with the bright light of being “bullied” in Health Affairs, HFC withdrew the slide. Ron “the Pretzel” Goetzel earned his moniker, twisting and turning his way around how to spin the fact that this “mistake” was ignored for so long despite all the times it had been pointed out. He ended up declaring the slide “was unfortunately mislabeled.” He gave no hint as to who did the unfortunate mislabeling, despite being repeatedly asked. We suspect the North Koreans. The whole story is here.

Summary and Next Steps

The first five of these methodologies in Pages 13-14 have several things in common:

- They all contradict the 6th methodology;

- They contradict the statement on page 15 that the only significant savings is in reducing admissions. Of course, self-contradiction is embedded in Wellness Ignorati DNA. To paraphrase the immortal words of the great philosopher Ned Flanders, the Wellness Ignorati “believe all the stuff in wellness is true. Even the stuff that contradicts the other stuff.”

- They call for megadoses of consulting and analytic expertise, contradicting the list on Page 10 that omits the cost of outside expertise.

Speaking of Methodology #6, our next installment will cover it. It’s called event-rate based plausibility testing. I would know a little something about that methodology, since I invented it. I am flattered that the Wellness Ignorati, seven years later, are finally embracing it. I am even more flattered that they aren’t attributing authorship to me. No surprise. That’s how the Wellness Ignorati got their name – by ignoring inconvenient facts. Ignoring facts means they cross their fingers that their customers don’t have internet access. Customers who do can simply google on “plausibility test” and “disease management” and see whose name pops up.

HERO Report: Wellness Industry Leaders Shockingly Admit that Wellness Is Bad for Morale

(March 14) This is the first in a series looking at the strengths and weaknesses of the HERO Outcomes Guidelines report, recently released by the Wellness Ignorati. One explanatory note: A comment accused us of insulting the Wellness Ignorati by calling them that. It is not an insult. It describes their brilliant strategy of ignoring facts and encouraging their supporters to do the same. We are very impressed by the discipline with which they have executed this strategy, and will be providing many examples. However, if they prefer a different moniker to describe their strategy, they should just let us know.

We encourage everyone to pick up a copy of this report, the magnum opus of the Wellness Ignorati. Unlike the Ignorati, we are huge advocates of transparency and debate (which they call “bullying”). We want employers to see both sides and decide for themselves what makes sense, rather than spoon-feed them selected misinformation and pretend facts don’t exist.

The latter is the strategy of the Wellness Ignorati. Indeed, they earned their moniker by making the decision to consistently ignore inconvenient facts. (This is actually a smart move on their part, given that basically every fact about wellness is inconvenient for them.) For example, they just wrote 87 pages on wellness outcomes measurement without admitting our existence, even though we wrote the only book — an award-winning trade best-seller — on wellness outcomes measurement. Observing the blatant suppression of facts and the loss of credibility that comes with blatantly suppressing facts is just one of the many reasons to read this report. In total, their report provides a far more compelling argument against pry, poke, prod and punish programs than we ourselves have ever made, simply by bungling the (admittedly impossible) argument in favor of them.

There is too much fodder for us to deconstruct in one posting, so over the next several weeks we will highlight aspects of this report that we think are especially revealing about the sorry state of the wellness industry.

In terms of getting off to a good start, the Ignorati are right up there with Hillary Clinton, with their first self-immolation appearing on Page 10. Remember our mantra from Surviving Workplace Wellness: In wellness, you don’t have to challenge the data to invalidate it. You merely have to read the data. It will invalidate itself.

And sure enough…

Ironically, the first self-immolation is the direct result of that rarest of qualities among the Ignorati: integrity. We were shocked by the revelation that the Ignorati actually realize that employee morale and a company’s reputation both suffer when companies institute wellness programs – but here is the screenshot. Both morale and reputation are listed, as “tangential costs.”

Try telling a CEO that the morale of his workforce and his corporate reputation are “tangential” to his business. We ourselves run a company, and we would not list low morale as a “tangential cost.” Quite the opposite: our entire business depends on our employees’ intrinsic motivation to do the best job they can. If their morale suffers, our profit suffers. That’s why we would never institute a wellness program. The last thing we want to do is damage our morale in order to measure our employees’ body fat. Obviously, it is harder to hire and retain people if you value body fat measurement over job performance, and we are pleased to see the Ignorati finally admit this.

Why, having now read this revelation in the Ignorati’s own words, that wellness is bad for morale, would any company still want to “do wellness”? Or as we say in Cracking Health Costs, “If you’re a general, would you rather have troops with high morale or troops with low cholesterol?”

The fact that employees hate wellness isn’t exactly a news flash. Anytime there is an article in the lay press, the public rails against wellness — or “bullies” the wellness industry, to use the term that the Ignorati use for people who disagree with them publicly. You don’t have to look far—just back to HuffPost on Wednesday. Or All Things Considered.

Obviously, if you have to bribe employees to do something (or fine them if they don’t), it’s because they don’t like it. If employees would rather sacrifice considerable sums of money than be pried, poked and prodded, they are sending you a message: “This is a stupid idea we want nothing to do with.”

The news flash is that this whole business of “making employees happy whether they like it or not,” as we say in Surviving Workplace Wellness, is now acknowledged, by the Ignorati as a group, to be a charade.

HERO seems to have exhausted their integrity quota pretty quickly, because after that welcome and long-overdue and delightfully shocking admission, they slip back into character.

Specifically, in their listing of costs, they conveniently forgot a bunch of direct, indirect and “tangential” costs. Like consulting fees. Generally, the less competent and/or honest the consultants, the more they charge. (For instance, we can run an RFP for $40,000 or less, and measure outcomes for $15,000 or less — and do both to the standards of the esteemed and independent GE-Intel Validation Institute. Most other consultants can’t match either the price or the outcome.) We’re not calling any consulting firm incompetent or dishonest other than pointing to a few examples that speak for themselves, but it does seem more than coincidental that the consultants involved in this report have conveniently forgotten to include their own fees as a cost.

And what about the costs of overdiagnosis caused by overscreening far in excess of US Preventive Services Task Force guidelines? The cost of going to the doctor when you aren’t sick, against the overwhelming advice of the research community?

Still, we need to give credit where credit is due, so we must thank the Ignorati for acknowledging that wellness harms morale. It took even less time for this acknowledgement than for the tobacco companies to admit that smoking causes cancer.

Bravo Wellness Offers “Savings” by Fining Employees

With all the incompetence, innumeracy, illiteracy and downright dishonesty we’ve documented in this field and with all the employee dissatisfaction, revolts and lawsuits, one can’t help but wonder: Why?

Why would any employer do this to their employees?

Why aren’t vendors held to minimal standards of competence?

Why do vendors and consultants caught lying simply double down on the lies and/or ignore questions about their lies–knowing full well they’ll get away with it because workplace wellness has nothing to do with actual wellness so no one cares that it doesn’t work?

Why are benefits consultants allowed to lie about outcomes for their partnered vendors?

Why, after they get caught lying, do they win awards for those very same outcomes?

Why doesn’t anyone care that much of what they say and do is wrong?

Why doesn’t anyone care that poking employees with needles far more than the USPSTF advises produces no savings?

Why put up with the morale hit from disgruntled employees and possible lawsuits?

Bravo to Bravo for admitting the reason: It’s to claw back insurance money from employees by making programs so unappealing and requirements so onerous that many employees would rather forfeit their money than have anything to do with them. Here are Bravo’s exact words:

You might say, they don’t actually “admit” it. Well, obviously they aren’t going to skywrite it. But how else would one interpret this comment? Obviously they aren’t going to save money right away by “playing doctor” and poking employees with needles. Especially because they aren’t even adhering to legitimate preventive services guidelines, such as those from the United States Preventive Services Task Force (USPSTF).

They also still subscribe to the urban legend that 75% of an employer’s spending is lifestyle-related, even though that myth has long since been discredited as meaningless and misleading for employers.

Creatinine and thyroid screens are not recommended by the USPSTF, so they shouldn’t be done at all, let alone provide the basis for claiming savings. So, we have eliminated everything except the obvious: employers get to collect fines for employees who care too much about their health and/or their dignity to submit to Bravo’s offer to play doctor.

This is the classic example of wellness done to employees instead of for them. The Bravo website is sprinkled with discussions of appeals processes for employees who face punishments for the crime of weighing too much and/or other personal shortcomings having nothing to do with work performance (and precious little to do with healthcare spending during the working years)…but everything to do with transferring wealth back to the owners.

As is our policy, we offered Bravo a chance — and $1000 — to provide an alternate explanation in a timely way, which they didn’t. Two differences between that forfeiture and Bravo’s punishments: Bravo lost their $1000 (of our money) for simply being unwilling to jot down a few words, whereas they brag about fining employees $1000 (of their money) for not being able to lose weight and keep it off, which is far harder than writing down a few words. And the other difference about the $1000? Having just raised $22-million, Bravo won’t miss it.

August Update: As a result of this exposé, Bravo has taken all this stuff off their website. They no longer brag about fining employees, they no longer discuss their appeals process at length on their site and they no longer pitch their D-rated lab tests like creatinine and thyroid. This is typical vendor behavior after getting caught. Score one for They Said What.

Ron Goetzel and Co-Authors Claim Workplace Wellness Evidence That a CSI Couldn’t Find

Questions for Ron Goetzel and co-authors based on September 2014 article

Category: Wellness

Short Summary of Goetzel Article’s Marketing Claim:

“Evidence accumulated over the past three decades shows that well-designed and well-executed programs that are founded on evidence-based principles can achieve positive health and financial outcomes.”

(This study was paid for by American Specialty Health, a successful and well-regarded company in the alternative network business that also, not surprisingly, has a wellness subsidiary.)

Materials Being Reviewed:

The study in question appeared in a recent issue of the Journal of Occupational and Environmental Medicine.

Most of these questions were originally asked by Jon Robison of Salveo Partners, in this post.

Questions for Ron Goetzel (who has not answered any relevant follow-up question asked of him about his Koop Award either, meaning now he has forfeited $2000 in honoraria)

Is it ethical to claim “no conflict of interest” in writing this article when a wellness company paid you for it and when you and most co-authors make their living in the wellness industry?

ANS: Refused to answer

Can you explain your reasoning for listing (see below) the Koop Award-winning State of Nebraska as a “best practice wellness program” after they admitted lying about saving the lives of cancer victims who never had cancer, and after it turned out their savings figures were clinically and mathematically impossible, and after it was exposed that the state’s wellness vendor sponsors the Koop Award?

ANS: Refused to answer

Why didn’t you disclose that literally none of these “best practice” programs (especially Nebraska’s, which deliberately waived all age-related cancer screening guidelines) follow US Preventive Services guidelines and therefore companies that follow these best practices on balance are more likely to harm their employees through overdiagnosis than benefit them?

ANS: Refused to answer

You describe (among others) a Procter & Gamble study from two-decade-old data as “recent.” Can you define “recent”? Can you name anyone at Procter & Gamble who even remembers this “recent” study?

ANS: Refused to answer

Why do you still cite Larry Chapman’s 25%-savings-from-wellness-programs allegation even though readily available online government data below shows wellness-sensitive medical events account for only 8.4% of a typical employer’s hospital cost (about 4% of total employer spending), thus making it impossible to save 25%?

ANS: Refused to answer
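
For readers who want to check the ceiling implied by that question, here is a minimal sketch. The 8.4% and 25% figures come from the question above. The 50% hospital share of total spending is an assumption (it is the share that reconciles 8.4% of hospital cost with "about 4% of total employer spending"), and the function name is made up for illustration.

```python
# Figures from the question above.
WELLNESS_SENSITIVE_SHARE_OF_HOSPITAL = 0.084
CLAIMED_SAVINGS = 0.25
# Assumption: hospital costs are roughly half of total employer spending.
HOSPITAL_SHARE_OF_TOTAL = 0.50


def max_possible_savings(ws_share_of_hospital, hospital_share_of_total):
    """Upper bound on savings as a share of total spending.

    Assumes every wellness-sensitive event is eliminated and the
    program itself costs nothing, which is the most generous case.
    """
    return ws_share_of_hospital * hospital_share_of_total


ceiling = max_possible_savings(
    WELLNESS_SENSITIVE_SHARE_OF_HOSPITAL, HOSPITAL_SHARE_OF_TOTAL
)
print(f"Best-case savings: {ceiling:.1%}; claimed savings: {CLAIMED_SAVINGS:.0%}")
print("Claim exceeds ceiling:", CLAIMED_SAVINGS > ceiling)
```

Even under the most generous assumptions, the ceiling is a fraction of the claimed figure.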

Why are you still citing Prof. Baicker’s article when she herself has backed off it three times, it has never been replicated, every attempt to replicate it (including the most recent one, in the “American Journal of Health Promotion”) has shown the opposite, and she herself says “there are very few reliable studies to confirm the costs and the benefits”?

ANS: Refused to answer

How can you cite RAND’s negative article as supporting the conclusion that “wellness can achieve positive financial outcomes” even though the author Soeren Mattke has specified that the modest health improvements among active participants produced no “positive financial outcomes”?

ANS: Refused to answer

Likewise, how can you cite the Pepsico health promotion study in Health Affairs in support of that same conclusion when that study concluded exactly the opposite: that health promotion had a negative ROI?

ANS: Refused to answer

Guest question submitted by Dr. Jon Robison: On p 931 you say that the RAND study found weight reduction (of course, only on active participants, excluding dropouts and non-participants) that was “clinically meaningful” and “long-lasting.” How does that square with this slide from that very same RAND study showing exactly the opposite? (Since this chart may be difficult to read, we’ll highlight the key finding, which was that by the 4th year the average active participant had sustained weight loss of only a few ounces.)

ANS: Refused to answer

Orriant publishes wellness data in Journal of Workplace Health Management and no one cares

Orriant, Ray Merrill

Category: Wellness

Short Summary of Company’s Marketing Claim:

“A New Scientific Study Proves Wellness Works”

Materials Being Reviewed:

http://www.orriant.com/File/4072ee6c-2bcd-43a5-83b6-e9035c8c0f1a

Questions for Orriant and Ray Merrill:

When you say “a new internationally-published study proves wellness works,” are you taking into account that the “international” journal publishing the work has a Zero impact factor, meaning that essentially no one believes anything they publish has enough value to cite?

ANS: Refused to answer

Are you attributing the fact that “participants had fewer health claims than non-participants” to your program, rather than to the obvious non-observable variable that participants are motivated whereas non-participants are not?

ANS: Refused to answer

Are you familiar with Health Fitness Corporation’s demonstration that participants will outperform non-participants even in the absence of a program? (See the year 2005 below — no program but participants outperformed non-participants nonetheless.)

ANS: Refused to answer

You also note that “those with the greatest health risks had the most improvement.” Are you familiar with Dee Edington’s work that says those with the greatest health risks will improve the most even in the absence of a program, due to the natural flow of risk?

ANS: Refused to answer

Non-participants’ medical costs were “2.9x greater” (about $4000 vs. about $1400). This, of course, is the record for the hugest savings ever claimed from a wellness program. Since government data shows that wellness-sensitive medical events account for only 4% of total costs or about $200/person, where did the other $2400/person in savings come from?

ANS: Refused to answer
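
The gap in that question can be reproduced directly. This is only a sketch using the rounded per-person figures quoted above ("about $4000," "about $1400," "about $200"); the variable names are illustrative.

```python
# Rounded per-person annual figures quoted in the question above.
NON_PARTICIPANT_COST = 4000
PARTICIPANT_COST = 1400
WELLNESS_SENSITIVE_SPEND = 200  # roughly 4% of total costs

claimed_gap = NON_PARTICIPANT_COST - PARTICIPANT_COST
cost_ratio = NON_PARTICIPANT_COST / PARTICIPANT_COST
# Savings that cannot come from wellness-sensitive events, even if
# every single one of those events were eliminated.
unexplained = claimed_gap - WELLNESS_SENSITIVE_SPEND

print(f"Claimed gap: ${claimed_gap}/person ({cost_ratio:.1f}x)")
print(f"Unexplained by wellness-sensitive events: ${unexplained}/person")
```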

Why didn’t the authors plausibility-check the entire population using a wellness-sensitive medical event analysis?

ANS: Refused to answer

Viverae wellness primes its own pump for an EEOC wellness lawsuit

Viverae

Category: Wellness

Short Summary of Company:

“Viverae gives our clients a platform for managing healthcare costs by motivating their employees to make healthy choices. Our comprehensive wellness programs address your organization’s goals to meet your employees where they are.”

Materials Being Reviewed:

Questions for Viverae:

General: What customers have actually signed up for this and are willing to admit it?

ANS: Refused to answer

Provision #2: Since your biometrics are out of compliance with USPSTF guidelines, wouldn’t a customer be risking an EEOC lawsuit by “requiring” every employee to do this against their will, subject to a large fine?

ANS: Refused to answer

Provision #4: Isn’t this the same as saying “If you sign up for two years, we’ll give you a third year maybe at a 20% discount if you do everything perfectly, but by doing so you waive your right to cancel after one or two years”?

ANS: Refused to answer

Provision #5: Has any customer of Viverae or any other wellness vendor with 1000 or more employees completed HRAs and submitted to biometric screens at a 100% rate, as you require in Provision #2?

ANS: Refused to answer

Provision #6: How could a health plan get a positive return on this program by offering people $720 apiece, when wellness-sensitive medical events account for less than $200/person in claims spend?

ANS: Refused to answer

Speaking of Provision #6, if your very own website says savings are $500/person (I’d be curious what legitimate academic research supports that), how can you guarantee savings when the cost of the incentive alone is $720?

ANS: Refused to answer
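
The two Provision #6 questions boil down to one subtraction. The sketch below uses only the per-person figures quoted above and deliberately ignores the vendor's own fees, which are not stated here and would only make the result worse.

```python
# Per-person figures quoted in the questions above.
INCENTIVE_COST = 720
VENDOR_CLAIMED_SAVINGS = 500
WELLNESS_SENSITIVE_SPEND = 200  # upper bound on what could be saved


def net_return(savings, incentive_cost):
    """Savings minus incentive cost; vendor fees are ignored (assumption)."""
    return savings - incentive_cost


net_at_claimed = net_return(VENDOR_CLAIMED_SAVINGS, INCENTIVE_COST)
net_at_ceiling = net_return(WELLNESS_SENSITIVE_SPEND, INCENTIVE_COST)

print(f"Net at vendor's claimed savings: ${net_at_claimed}/person")
print(f"Net if all wellness-sensitive spend vanished: ${net_at_ceiling}/person")
```

Both results are negative, so the incentive alone puts the program underwater before any other cost is counted.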

Dee Edington Drains The Life Out Of The Vitality Group’s Distortion Of His Work

The Vitality Group

Short Summary of Company:

“Vitality is an active, fully integrated wellness program designed to engage your employees on their Personal Pathway to better health. Employers can choose to introduce the Vitality experience with one of our comprehensive plans. Activate is designed to bring wellness into the workplace. Elevate includes all the components of Activate, plus additional engagement features.”

Materials Being Reviewed

The Vitality Group “wearables at work” presentation. This presentation describes the health risk reduction achievable through engaging members at workplaces by wearing activity trackers.

Summary of key figures and outcomes:

Questions for Vitality Group:

You appear to be claiming that people who are “not active” reduced their risk factors simply by being engaged, without actually doing or reporting anything. A health services researcher might say that instead of taking credit for both the 6-point decline in the study group and the 5-point decline in the de facto control group risk, in reality only the difference between the two groups (1 point) could be attributable to fitness activities. If you disagree, can you explain exactly what it is that makes people in the inactive group so successful even if they don’t do anything?

ANS:

The amount that could be attributable to fitness activities is the difference between the two groups compared. For clarification, we compared (1) individuals who were engaged in fitness activities with the Vitality program (who might also be using other program elements), with (2) those who were engaged in the Vitality program on other elements but were not recording fitness activities directly with us.

So the graphic focused only on the incremental difference between the described fitness and non-fitness cohorts. Both the fitness and non-fitness cohorts were participating in other aspects of the Vitality program to track and improve their health, but the non-fitness group did not record their fitness activities through Vitality. Individuals in the non-fitness group may also have engaged in some fitness activities but simply did not log any of these activities through the Vitality program.

Observation:

Thank you for that clarification. When I look at the “difference between the two groups compared” I am seeing a 5-point decline in the first group and a 6-point decline in the second group, netting out to 1% as an “incremental difference,” rather than the 13% and 22% declines you claim, but perhaps readers will see it differently.
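
The netting-out described here is just a difference between two declines. A minimal sketch, using the 6-point and 5-point figures from the question above; the function name is illustrative, and nothing else about the underlying data is assumed.

```python
def net_attributable_change(study_decline, comparison_decline):
    """Decline attributable to the intervention: study group minus comparison group."""
    return study_decline - comparison_decline


# Point declines in the share of high-risk members, as quoted in the question.
STUDY_GROUP_DECLINE = 6       # logged fitness activity
COMPARISON_GROUP_DECLINE = 5  # engaged, but logged no fitness activity

net = net_attributable_change(STUDY_GROUP_DECLINE, COMPARISON_GROUP_DECLINE)
print(f"Attributable to fitness activity: {net} point(s)")
```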

How does your claim of success adjust for dropouts, and the likelihood that dropouts would have worse performance than people who were willing to be measured twice?

ANS:

This analysis did not include an adjustment for dropouts as the intent was not to make assumptions about unknown risk factors. A more detailed investigation could include this as a refinement.

Are you familiar with the concept of the “natural flow of risk” described on this slide researched and prepared by the “father of wellness measurement,” Dee Edington?

Edington’s research shows that nearly 50% of people with >4 risk factors will eventually move to a lower risk category on their own. Having been exposed to this “natural flow of risk” data, do you still believe that the non-active and active members (both groups were selected on the basis of having >4 risk factors) declined in risk due to the program, or else could some or all of the decline be due to (a) self-selection into the active group; (b) ignoring discouraged dropouts; and (c) the natural flow of risk?

Response:

Yes, we did allow for this effect by looking at the net changes in overall risk groupings by level of activity in the Vitality program. In other words, the percentages shown account for the overall flow of risk, including those who improved over the period but also those who deteriorated. The graphic focused on the proportion of high risk people in each group, but did allow for people moving into the group over the period.

Dee Edington’s work found that expected natural migration is actually a deterioration in risk groups as people naturally flow to high risk.

Often there is a tendency in wellness to compare consistent cohort risk transitions to these expected natural migration increases. Although both cohorts in the analysis saw an overall net improvement in risk groups, this comparison to natural migration was not the intent of this analysis. Instead the intent was to compare the relative changes in the two cohorts. This analysis showed that the cohort who engaged in fitness activities through Vitality had a lower proportion of high risk individuals as of their first risk measure, but had a greater net improvement in risk groups as of the last measure than those who did not engage in fitness activity through Vitality.

Observation:

Hmm…well we can’t both be right. I’m looking at the exact same Dee Edington slide you are, but I am seeing the population’s risk “naturally flow” in both directions, not just “a deterioration in risk groups as people naturally flow to high risk.” Obviously the validity of the alleged declines in your cohorts is dramatically different depending on whether one uses your interpretation of Dr. Edington’s work (in which case your results are outstanding) or mine (in which case except for 1%, they are due to the natural flow downward of the highest-risk segment).

Like Alvy Singer did with Marshall McLuhan in Annie Hall, I took the liberty of asking Dee Edington himself to referee our disagreement. This is his response:

“The correct interpretation of that slide and of my work is that the natural flow of risk in a population moves in both directions, and must be understood in order to gauge impact of an intervention. It is not valid to simply start with people who were high-risk and claim credit for all risk reduction in that cohort while ignoring people who migrate in the other direction.”

Wellnet Detects Undetected Claims Costs

Wellnet

Short Summary of Intervention:

Risk reduction program. “Our company’s focus is on exceptional execution and the manner in which health benefits are delivered and managed. Healthcare is personal and we treat it that way. Our mission to provide a level of service, collaboration and integration you will not find elsewhere in the marketplace.”

Materials Being Reviewed:

Summary of key figures and outcomes:

- 18-to-1 ROI

- $463,000 reduction ($180 per person) in medical spending, on a base of about $6 million.

- $21 million reduction in “undetected claims costs” on 55 high-risk members ($4 million) and 453 medium-risk members ($17 million).

- Medical trend reduction from 8% to 0.06%

Questions for Wellnet:

What are “undetected claims costs”? We can’t find an insurance company that has heard of them, and we can’t find any definition on Google, or even any reference to them at all, other than Wellnet’s.

ANS: Refused to answer

It’s not clear whether the 18-to-1 ROI is driven by the $180/person reduction in medical spending or the $21 million reduction in “undetected claims cost.” If the former, does that mean your wellness program only cost $10 per person?

ANS: Refused to answer

If the latter, how does the $21 million in “undetected claims costs” relate to the $6 million in detected claims costs?

ANS: Refused to answer

You list 508 medium-risk and high-risk members whose risk reduction accounted for the $21 million in “undetected claims costs.” Is it possible that many of the unmentioned 2000 employees and dependents who are low-risk might increase risk factors and therefore offset those savings, as Dee Edington’s model below would predict?

ANS: Refused to answer

By changing the axes on the graphs so that the cost bars are not drawn to scale, wouldn’t the physical difference in the height of the bars (about 50%) appear to dramatically overstate the savings (about 7.3%)? Doesn’t omitting the “$5.0” hashmark on the top graph exacerbate this effect even more?

ANS: Refused to answer

How does the 7.3% negative spending trend on the lower graph tie to the 0.06% positive spending trend claimed in the first section?

ANS: Refused to answer

On just the 55 high-risk members alone, you are saving $73,000 apiece, about 4 hospitalizations each. Can you share how this might be possible to do, through your wellness tools?

ANS: Refused to answer
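
Two of the figures in these questions are simple divisions, sketched below from the numbers in the summary above. Treating ROI as savings divided by program cost is an assumption, since Wellnet does not define it in the material quoted here.

```python
# Figures from the summary of key outcomes above.
ROI = 18                         # "18-to-1"
SAVINGS_PER_PERSON = 180         # dollars
HIGH_RISK_SAVINGS = 4_000_000    # "undetected claims costs," high-risk members
HIGH_RISK_MEMBERS = 55

# Assumption: ROI = savings / program cost, so cost = savings / ROI.
implied_cost_per_person = SAVINGS_PER_PERSON / ROI
savings_per_high_risk_member = HIGH_RISK_SAVINGS / HIGH_RISK_MEMBERS

print(f"Implied program cost: ${implied_cost_per_person:.0f}/person")
print(f"Savings per high-risk member: ${savings_per_high_risk_member:,.0f}")
```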

HealthFitness takes credit for program savings without having a program

HealthFitness

Short Summary of Intervention:

“When you partner with HealthFitness, we work collaboratively with you to develop a strategic plan for program implementation, which includes a cultural assessment and an operational plan. You can expect results-oriented programs and services delivered through a highly personalized strategy, matched to your employees and culture.”

Materials Being Reviewed:

Success at risk reduction and translation of that risk reduction into cost savings. These excerpts are from the successful Koop Award application at http://www.thehealthproject.com/documents/2011/EastmanEval.pdf.

Summary of key figures and outcomes:

- Reduction in risk factors from 3.20 to 3.03 — net change of 0.17 — over 5 years. This success excludes dropouts.

- 24% improvement in costs vs. non-participants, or $460/year at Eastman Chemical (currently up to >$500/year according to HFC website)

Questions for Health Fitness Corporation:

Since only about 20% of all inpatient events are wellness-sensitive, and you only reduced risk factors by 0.17 per person, and hospital expenses are at most 50% of total spending, how is it that you are able to reduce spending by 24%?

ANS: Refused to answer

Why did you take credit for savings in 2005, even though according to your own slide you didn’t have a program in 2005?

ANS: Refused to answer

Does starting the Y-axis at $1800 instead of $0 create the illusion of greater separation between the two cohorts?

ANS: Refused to answer

Your website says that comparing participants to non-participants “adheres to statistical rigor and current scientific standards for program evaluation” and “is recognized by the industry as the best method for measurement in a real-world corporate wellness program.” Can you explain how non-motivated non-volunteers who decline financial incentives to improve their health are comparable to motivated volunteers, especially in light of the separation between the two groups that took place just on the basis of differential mindset in 2005, before you had a program?

ANS: Refused to answer

You and your customers have won three Koop Awards in the last 4 years. Do you think also being a sponsor of the Koop Award (along with Eastman, in this case) has helped you win these awards or is this just a coincidence?

ANS: Refused to answer

Why Nobody Believes the Numbers defines the “Wishful Thinking Multiplier” as “alleged cost savings divided by alleged risk reduction.” Your cost savings is $460 and your risk reduction is 0.17, for a Wishful Thinking Multiplier of 2700, the highest in the industry. The book calculates that a risk reduction of your magnitude (even assuming dropouts also reduced risk by the same amount) could generate roughly an $8 reduction in annual spending. To what do you attribute your ability to reduce spending by 50x what is mathematically possible?

ANS: Refused to answer
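
The multiplier is easy to recompute from the definition quoted above. This sketch uses only the $460, 0.17 and $8 figures from the question; the function name is illustrative.

```python
def wishful_thinking_multiplier(alleged_savings, alleged_risk_reduction):
    """Alleged cost savings divided by alleged risk reduction."""
    return alleged_savings / alleged_risk_reduction


ALLEGED_SAVINGS = 460            # dollars per person per year
ALLEGED_RISK_REDUCTION = 0.17    # risk factors per person
PLAUSIBLE_SAVINGS = 8            # dollars, the book's estimate as quoted above

multiplier = wishful_thinking_multiplier(ALLEGED_SAVINGS, ALLEGED_RISK_REDUCTION)
overshoot = ALLEGED_SAVINGS / PLAUSIBLE_SAVINGS

print(f"Wishful Thinking Multiplier: {multiplier:.0f}")
print(f"Claimed savings vs. plausible savings: {overshoot:.1f}x")
```

The exact quotients land slightly above the rounded 2700 and 50x used in the question.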

Help us with the arithmetic below, also from this Koop Award application.

How is it mathematically possible to have a higher ROI ($3.62) when also including the cost of incentives in program expense than the ROI ($3.20) excluding the cost of paying incentives to employees to participate?

ANS: Refused to answer
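
One way to see the problem: for a fixed amount of savings, adding incentives to program expense can only increase the cost and therefore lower the ROI. The sketch below assumes ROI means savings divided by cost (the application's exact definition is not reproduced here) and backs out what the incentives would have to cost for both published ROIs to be true.

```python
def implied_incentive_cost(roi_including, roi_excluding, savings=1.0):
    """Incentive cost implied by two ROIs computed on the same savings.

    Assumes ROI = savings / cost. Savings is normalized to 1.0, so the
    result is expressed as a fraction of total savings.
    """
    cost_including = savings / roi_including
    cost_excluding = savings / roi_excluding
    return cost_including - cost_excluding


# ROIs from the Koop Award application, as quoted in the question above.
incentives = implied_incentive_cost(roi_including=3.62, roi_excluding=3.20)
print(f"Implied incentive cost: {incentives:.4f} per $1 of savings")
```

A negative result means the incentives would have had to pay the employer, not the employees.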

Update December 2014: Ron Goetzel admits HFC lied. (See #5 and #6.) The slide was “unfortunately mislabeled,” using the passive voice, as though it was an act of God (“the game was rained out”) or else perhaps the North Koreans. The geniuses at HFC apparently didn’t notice this “unfortunate mislabeling” for 4 years, despite its having been pointed out to them many times before this.